Artificial Intelligence (AI) is now a dominant voice in education, hailed as a revolutionary tool for personalized learning. But this rapid integration fosters a dangerous assumption: that every academic provider is a genuine expert. What happens when the human “expert” becomes a mere mouthpiece for AI, lacking real subject mastery? This blurring of roles creates systemic vulnerabilities. This isn’t a theoretical threat; it’s a financial and academic betrayal vividly captured in real parent complaints.

The Price of Deception: AI & Premium Private Tutoring

At a private tutoring company, one parent’s complaint exposed this deception:

“Shelby just had her first session and it did not go well at all… My daughter told me that he was using ChatGPT for answers to questions, and was completely unprepared to discuss the ISEE or what Shelby needs to focus on. He also had no idea how to specifically work with someone with dyslexia. We do not want to continue with him… and we would like a full refund.”

The experience of Shelby, a student needing specialized ISEE preparation and dyslexia support, spotlights this failure. Her parent paid a premium price (e.g. $80 per hour) for a tutor who was demonstrably clueless and overtly reliant on AI. This wasn’t just poor service; it was a dereliction of educational duty. When high-cost, private support fails this spectacularly, it actively undoes the foundational work of teachers and schools. This is a systemic failure because students rely on these services as a safety net. When that net is revealed as a sham (a non-expert relaying automated answers), it entirely erodes the value proposition of supplementary education.

Knowledge Erosion: The AI-Amplified Firewall Failure

The most immediate threat to the educational system is knowledge erosion. AI, while powerful, often “hallucinates” or generates plausible but incorrect information. Crucially, expert teachers and tutors are the necessary human firewall; they critically evaluate AI output. A non-expert, however, lacks the core knowledge to discern fact from flaw.

Imagine a high schooler struggling with quantum physics who receives flawed AI-generated explanations via an unqualified tutor. If the student internalizes these subtle mistakes, they build their subsequent coursework, university applications, and future studies on shaky ground. In fact, research shows that students who use AI as a crutch to find answers often score significantly worse on subsequent tests, even when they feel more confident about their performance.

Beyond content errors, the impact on student confidence is profound. Experiences like Shelby’s, where the student sees the tutor as inept, breed frustration and distrust. Furthermore, students with Special Educational Needs (SEN) require tailored expertise. For them, a generic AI response is not just useless; it is actively harmful.

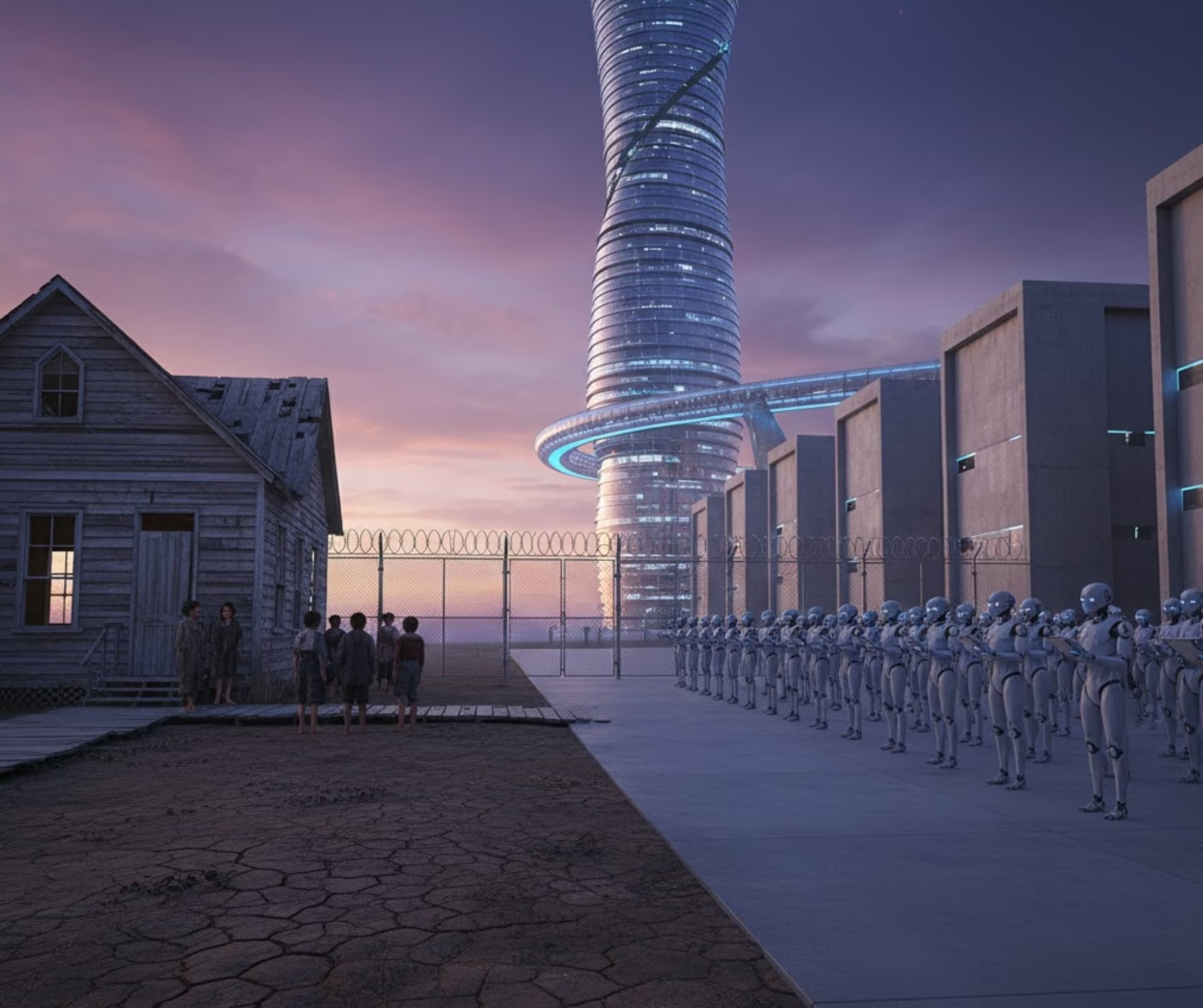

Widening Gaps: The AI-Driven Achievement Divide

Worse still, the uncritical use of AI by non-expert tutors threatens to dramatically widen the existing achievement gap. Students from less privileged backgrounds already lack access to high-quality instruction, and they are the most dependent on effective, personalized tutoring to catch up. If they instead pay for generic AI answers relayed by unqualified tutors, they are deprived of the expertise they need. These students are less likely to question the information and more likely to enter college unprepared. This is the cruel paradox: a tool meant to democratize education actually contributes to greater inequity by creating a long-term financial and academic liability.

The integrity of student work is also at risk. Tutors who merely use AI to rephrase or correct assignments without teaching the student are essentially contributing to academic dishonesty. This practice inflates grades and makes transcripts unreliable for universities and employers. Ultimately, this undermines the entire meritocratic basis of the education system.

Systemic Solutions: Mandating Transparency and Expertise

The allure of AI is its scalability. However, its implementation must be guided by human integrity. AI must be a tool to enhance, not a complete replacement for, expert instruction. Tutoring companies and educational partners must implement strict standards. This requires robust vetting of subject matter expertise and ongoing professional development focused on the ethical use of AI. Tutors must be taught to apply their own discernment. The system also needs transparency.

Companies must explicitly disclose the role of AI. Regulatory bodies should consider mandatory certifications that ensure a tutor possesses genuine knowledge, particularly for high-need areas. Academic support must strengthen the educational system. When the “expert” is not an expert, and AI masks incompetence, the entire system suffers. The promise of AI requires that we wield it responsibly and transparently. That promise will be fulfilled only when AI is used in conjunction with genuine human expertise.

For more information, reach out to Dr. Theresa Billiot.
